Lead author of 'Attention Is All You Need'

Ashish Vaswani

Profile

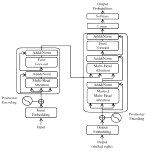

Ashish Vaswani is the lead author of “Attention Is All You Need”, the 2017 paper that introduced the Transformer and quietly rewired the entire field of AI. If you’ve used GPT, Claude, Gemini, Stable Diffusion, or virtually any modern model, you’ve used the architecture he helped create. The paper has accumulated over 200,000 citations and counting, making it one of the most influential pieces of computer science research of the century.

Vaswani earned his PhD at USC under David Chiang, working on statistical machine translation, then spent five-plus years at Google Brain, where the Transformer was born. He left Google in 2021 to co-found Adept AI with fellow Transformer co-author Niki Parmar, then walked away from that company in late 2022, reportedly over disagreements with investors. In 2023 he and Parmar started over with Essential AI, where Vaswani is now CEO.

Essential raised $56.5M in 2023 from a who’s-who of AI infrastructure players and investors, including Nvidia, AMD, Google, and Thrive Capital. The pitch was building enterprise AI agents, but the company stayed quiet for two years before shipping anything substantial. In December 2025 they finally released Rnj-1, an 8B-parameter open-weights model in base and instruct variants, trained on 8.4T tokens with the Muon optimizer rather than AdamW and released under Apache 2.0. It hits ~20.8% on SWE-bench Verified, competitive with Gemini 2.0 Flash and Qwen2.5-Coder 32B at a fraction of the size. It was deliberately built with minimal post-training and no RL, a bet that disciplined pretraining beats clever fine-tuning.

For developers learning AI: Vaswani is interesting precisely because he’s not a celebrity researcher. He doesn’t tweet much, doesn’t podcast, doesn’t evangelize. He shipped one paper that changed everything, then put his head down and started a company. Watch what Essential AI ships — when one of the people who invented the Transformer bets against the post-training-heavy industry consensus, it’s worth paying attention.

Key Articles & Papers

- Attention Is All You Need
- Self-Attention with Relative Position Representations
- Image Transformer
- Tensor2Tensor for Neural Machine Translation
- Stand-Alone Self-Attention in Vision Models
- Announcing Rnj-1: Building Instruments of Intelligence