Amazon Frontier AI & Robotics researcher, Berkeley professor

Pieter Abbeel

Profile

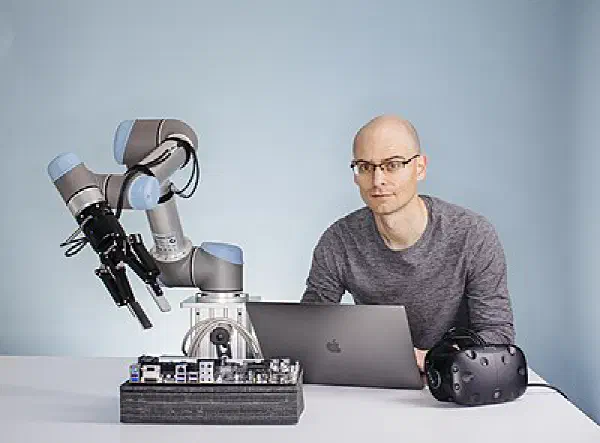

Pieter Abbeel is the professor who made deep reinforcement learning for robots a real research field, and his lab at UC Berkeley is arguably the most productive pipeline of robot-learning talent in the world. Trained at Stanford under Andrew Ng — where his 2004 paper on apprenticeship learning via inverse RL became a foundational reference — he moved to Berkeley in 2008 and built the Robot Learning Lab inside BAIR. From there came a steady drumbeat of results that pushed robots from scripted industrial arms toward systems that learn from experience.

If you’ve used modern RL, you’ve almost certainly used ideas that trace back to his group. Trust Region Policy Optimization, Generalized Advantage Estimation, domain randomization for sim-to-real transfer, and hindsight experience replay all came out of work he co-authored, much of it with his students during the OpenAI collaboration years; Proximal Policy Optimization, led by his former student John Schulman at OpenAI, builds directly on that line of work. The list of people who trained or worked with him is extraordinary: Sergey Levine, Chelsea Finn, John Schulman, Karol Hausman, Jim Fan, Igor Mordatch, Rocky Duan. Pick a serious robot-learning shop today (Google, Meta, Physical Intelligence, Skild, a half-dozen startups) and you’ll likely find an Abbeel alumnus running it or advising it.

In 2017 he co-founded Covariant, which focused on AI-powered warehouse picking. The company shipped real systems into real warehouses, which is harder than most people realize, and in 2024 Amazon hired Abbeel and the core team in one of those quasi-acquisitions that have become common for frontier AI labs. He remains a Berkeley professor and hosts The Robot Brains Podcast, which has become one of the better long-form interview shows for people who want to understand what’s actually happening at the intersection of AI and embodiment.

For developers getting into AI, Abbeel is the person whose papers you read to understand how policy gradients and sim-to-real actually work — not just the math, but the engineering tricks that make them usable. He talks about robotics with a clarity that cuts through the hype cycles, which matters because robot learning is in one of its louder ones right now.

Key Articles & Papers

- Apprenticeship Learning via Inverse Reinforcement Learning
- Trust Region Policy Optimization
- High-Dimensional Continuous Control Using Generalized Advantage Estimation
- End-to-End Training of Deep Visuomotor Policies
- RL^2: Fast Reinforcement Learning via Slow Reinforcement Learning
- Domain Randomization for Transferring Deep Neural Networks from Simulation to the Real World
- Proximal Policy Optimization Algorithms
- Hindsight Experience Replay
- Model-Agnostic Meta-Learning
- Decision Transformer: Reinforcement Learning via Sequence Modeling

YouTube

Spotify Podcasts