Nvidia Chief Software Architect, Groq founder and LPU creator

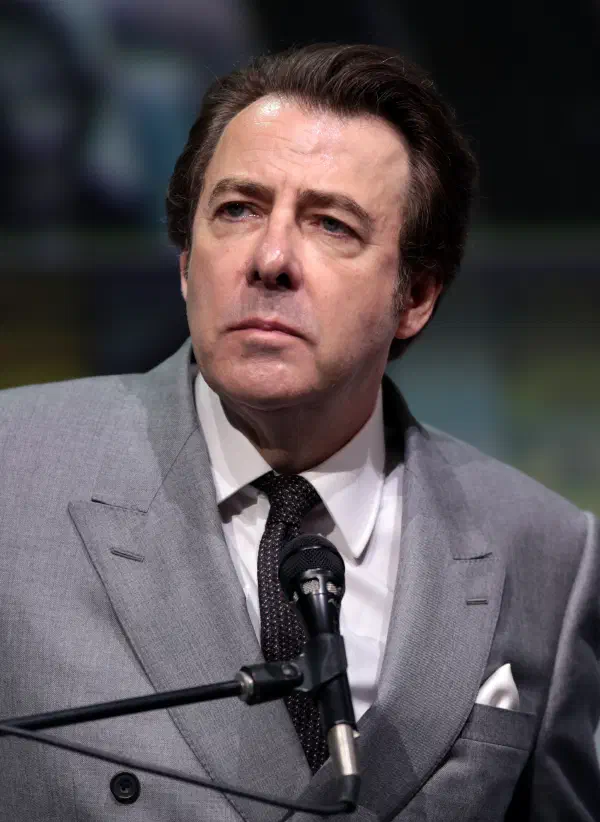

Jonathan Ross

Profile

Jonathan Ross has the rare distinction of having designed two of the most consequential AI chips ever shipped. While at Google, he started what became the Tensor Processing Unit as a 20% project, building the core of a chip that would eventually power more than half of Google’s internal compute. A high school dropout who had already reshaped AI hardware once, he left in 2016 to found Groq and do it again, this time for inference.

Groq’s bet was architectural heresy. Where Jensen Huang’s Nvidia GPUs are general-purpose massively parallel machines optimized for training, Ross built the Language Processing Unit (LPU) — a deterministic, single-threaded streaming processor with the memory baked onto the die. The result is inference latency that GPUs cannot match. If you’ve ever hit Groq’s API and watched a Llama model fire out hundreds of tokens per second with no perceptible delay, that’s the LPU. For developers, it changed what “real-time LLM” actually means — voice agents, live copilots, and agentic loops that were too slow on GPUs suddenly became viable.
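To make "real-time" concrete, builders usually track two numbers for a streamed response: time to first token and tokens per second. The sketch below is a minimal, provider-agnostic way to measure both from any iterable of tokens. The names `measure_stream` and `fake_stream` are hypothetical helpers invented for illustration, and no real Groq or Nvidia API is called; in practice you would pass in whatever streaming iterator your client library returns.

```python
import time
from typing import Callable, Iterable, Iterator, Optional


def measure_stream(
    tokens: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> dict:
    """Consume a token stream and report basic latency figures.

    `clock` is injectable so the function can be tested deterministically.
    """
    start = clock()
    first_token_at: Optional[float] = None
    count = 0
    for _ in tokens:
        now = clock()
        if first_token_at is None:
            first_token_at = now  # arrival time of the first token
        count += 1
    total = clock() - start
    return {
        "tokens": count,
        "time_to_first_token_s": (
            None if first_token_at is None else first_token_at - start
        ),
        "tokens_per_second": count / total if total > 0 else 0.0,
    }


def fake_stream(n: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a streaming LLM response (assumption)."""
    for i in range(n):
        time.sleep(delay_s)  # simulate per-token generation latency
        yield f"tok{i}"


if __name__ == "__main__":
    # Simulated stream: 50 tokens at roughly 5 ms each.
    print(measure_stream(fake_stream(50, 0.005)))
```

For voice agents and agentic loops, time to first token tends to matter as much as raw throughput, which is why the two are reported separately here.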

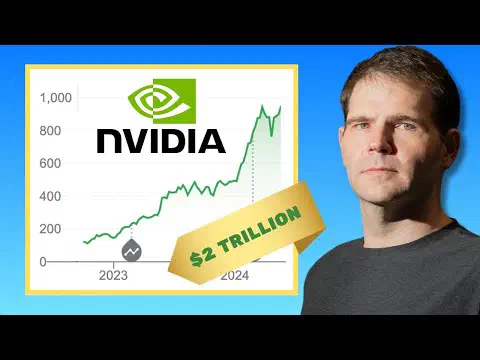

The commercial trajectory followed the tech. A $1.5B commitment from Saudi Arabia in early 2025 funded a Dammam data center. A $750M round in September 2025 valued Groq at $6.9B. Then in December 2025, Nvidia did something it had never done before: it paid roughly $20 billion — its largest deal on record — to license Groq’s inference tech and hire Ross along with most of his leadership team. The “non-exclusive licensing” framing preserved the fiction of competition, but the signal was unmistakable. The company whose GPUs defined modern AI quietly admitted that when it comes to inference, the LPU was right.

Ross is now Chief Software Architect at Nvidia, working on what he’s called a mission to double the world’s AI compute. For builders, the lesson is practical: inference is becoming a first-class engineering problem separate from training, and the people who treat it that way — architecturally, not just as a smaller version of the training stack — are the ones reshaping the economics of running models.

Key Articles & Papers

Groq and Nvidia Enter Non-Exclusive Inference Technology Licensing Agreement

Every. Word. Matters.

The Future of AI Compute: A Conversation With Jonathan Ross

Videos

YouTube

Spotify Podcasts