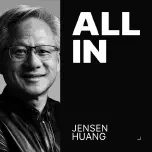

NVIDIA CEO and co-founder, AI infrastructure pioneer

Jensen Huang


Profile

Jensen Huang co-founded NVIDIA in 1993 at a Denny's in San Jose, betting on a future where specialized graphics chips would matter. For more than a decade, that bet was about video games. Then, in 2006, NVIDIA launched CUDA, a programming model that let developers use GPUs for general computation. Almost nobody asked for it. Jensen pushed it anyway, reportedly spending billions on a platform with no clear market. It is arguably the most consequential product decision in modern computing.

When Geoffrey Hinton's students trained AlexNet on two GeForce GTX 580 cards in 2012, the deep learning era started on Jensen's hardware: not by design, but because years of preparation had already put general-purpose GPUs in researchers' hands. From there, NVIDIA stopped being a gaming company that also did compute and became the compute company. He personally hand-delivered the first DGX-1 to OpenAI in 2016. The A100, H100, and now Blackwell GPUs are the substrate on which most major foundation models, including GPT and Llama, are trained; Google is the notable exception, training Gemini largely on its own TPUs. If you're using an AI API today, odds are you're renting time on Jensen's silicon.

What makes him different from most CEOs is that he's still the engineer in the room. He keeps his org chart flat (reportedly around 60 direct reports), reads his own email, and narrates the technical roadmap himself in multi-hour GTC keynotes, always in his trademark leather jacket. His memos are legendary for their specificity: no corporate hedging, no MBA abstractions. The company's moat isn't just the chips; it's CUDA, cuDNN, NCCL, TensorRT, and a decade of libraries that make porting away excruciating. AMD's accelerators can look better on price/performance on paper. It doesn't matter. The software is the lock-in.

For developers learning AI, Jensen matters because he controls the bottleneck. Which models get trained, how long training takes, and what it costs are all shaped by supply decisions made inside NVIDIA. If you want to understand why labs like Anthropic raise money at valuations that assume compute will stay scarce, why hyperscalers are building their own accelerators (Google's TPU, AWS Trainium, Meta's MTIA), and why export controls on GPUs became geopolitics, start with Jensen. He didn't predict the AI revolution. He built the infrastructure that made it possible, then kept raising the ceiling.

Key Articles & Papers

- Jensen Huang's 2024 Stanford GSB Talk: Pain and Suffering

- Acquired Podcast: NVIDIA - The Dawn of the AI Era (Jensen Interview)

- Caltech Commencement Address

- NVIDIA GTC 2024 Keynote: Blackwell Unveiled

- NVIDIA 2024 Annual Report

Controversies

- Export controls & China: NVIDIA has designed multiple chip variants (A800, H800, H20) to stay just under US export restrictions to China, drawing congressional scrutiny. Jensen has publicly argued against aggressive restrictions, calling them counterproductive.

- Market concentration: NVIDIA's estimated 80%-plus share of AI training silicon has drawn antitrust attention from the DOJ and EU regulators, particularly around CUDA bundling and allocation practices during the 2023–2024 supply crunch.

- Supply allocation favoritism: Reports during peak GPU scarcity alleged NVIDIA prioritized certain customers (hyperscalers, politically connected labs) over others. NVIDIA has denied preferential allocation.

- Crypto-mining disclosures: In 2022, NVIDIA paid $5.5 million to settle SEC charges that it had inadequately disclosed how much of its GeForce (gaming) revenue came from crypto mining in the run-up to the 2018 crypto crash.
