EleutherAI Executive Director, open-source AI researcher

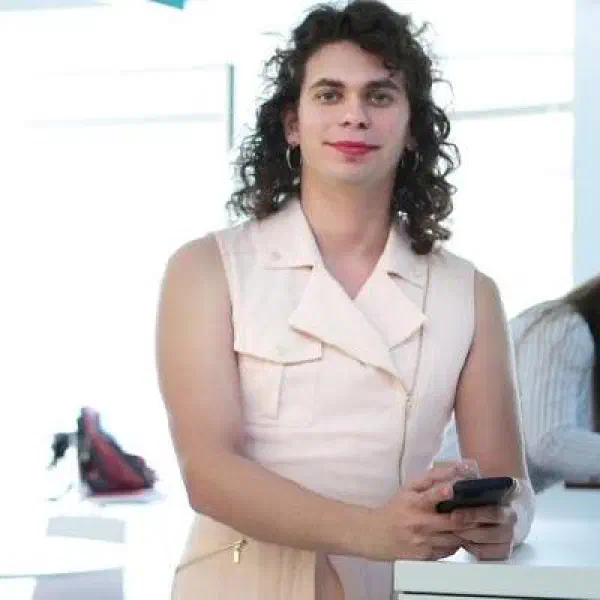

Stella Biderman

Profile

Stella Biderman is the Executive Director of EleutherAI, the open-source research collective that pulled off something that wasn’t supposed to be possible: building competitive large language models outside of a frontier lab. Before EleutherAI incorporated as a non-profit in 2023, it was a Discord server. Biderman is the reason it became an actual research institute with an actual mission — keeping the study of large models in reach of academics, auditors, and independent researchers who don’t want to partner with the companies selling them.

She’s a mathematician by training (University of Chicago, with a philosophy degree alongside it) and picked up an M.S. in CS from Georgia Tech in 2022 while working a day job as a research scientist at Booz Allen Hamilton. Although Sid Black, Leo Gao, and Connor Leahy founded EleutherAI in 2020, Biderman became a central figure almost immediately: she co-authored The Pile, the 825 GiB open dataset that a generation of open models would be trained on, and then drove the releases of GPT-Neo, GPT-NeoX-20B, and Pythia.

Pythia is the work that matters most if you care about how these models actually behave. It’s a suite of 16 LLMs from 70M to 12B parameters, all trained on the same data in the same order, with 154 public checkpoints per model. That means researchers can study how behaviors emerge during training — memorization, bias, capability jumps — in a way that’s impossible with closed models. It’s the closest thing the field has to a controlled experiment on LLM development, and it exists because Biderman and her collaborators decided the field needed it.
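To make the "154 public checkpoints" concrete, here is a minimal sketch of how you might enumerate them and load one. It rests on assumptions that come from the public Pythia release rather than from this profile: that checkpoints were saved at step 0, at powers of two up to 512, and then every 1,000 steps through 143,000, and that each is published on the Hugging Face Hub as a revision named `step<N>`. The helper names are hypothetical, and `load_checkpoint` needs the `transformers` package and a network connection.

```python
def pythia_checkpoint_steps():
    """Training steps with a saved checkpoint (assumed schedule, see lead-in)."""
    log_spaced = [2 ** i for i in range(10)]       # 1, 2, 4, ..., 512
    linear = list(range(1000, 143001, 1000))       # 1000, 2000, ..., 143000
    return [0] + log_spaced + linear


def revision_name(step):
    """Hub revision label for a step, assuming the 'step<N>' naming scheme."""
    return f"step{step}"


def load_checkpoint(step, model_name="EleutherAI/pythia-70m"):
    """Load one intermediate checkpoint (downloads weights; not run here)."""
    # Imported lazily so the helpers above work without transformers installed.
    from transformers import AutoModelForCausalLM
    return AutoModelForCausalLM.from_pretrained(
        model_name, revision=revision_name(step)
    )


steps = pythia_checkpoint_steps()
print(len(steps))  # 1 + 10 + 143 = 154
```

Because every model in the suite saw the same data in the same order, calling something like `load_checkpoint(3000)` for two different model sizes would give you two models that have seen exactly the same tokens, which is what makes side-by-side comparisons across scale meaningful.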

For developers learning AI, she’s worth following because she’s one of the few senior people in the field treating openness as a technical requirement rather than a marketing position. She’s testified before Congress on open-source AI policy, contributed to the BLOOM multilingual model through BigScience, and consistently argues — with receipts — that reproducible science and open weights aren’t at odds with safety. If you use a non-OpenAI, non-Anthropic model today, there’s a decent chance the path from research to your laptop went through her work.

Key Articles & Papers

- The Pile: An 800GB Dataset of Diverse Text for Language Modeling
- GPT-NeoX-20B: An Open-Source Autoregressive Language Model
- Pythia: A Suite for Analyzing Large Language Models Across Training and Scaling
- BLOOM: A 176B-Parameter Open-Access Multilingual Language Model
- Datasheet for the Pile
- Emergent and Predictable Memorization in Large Language Models