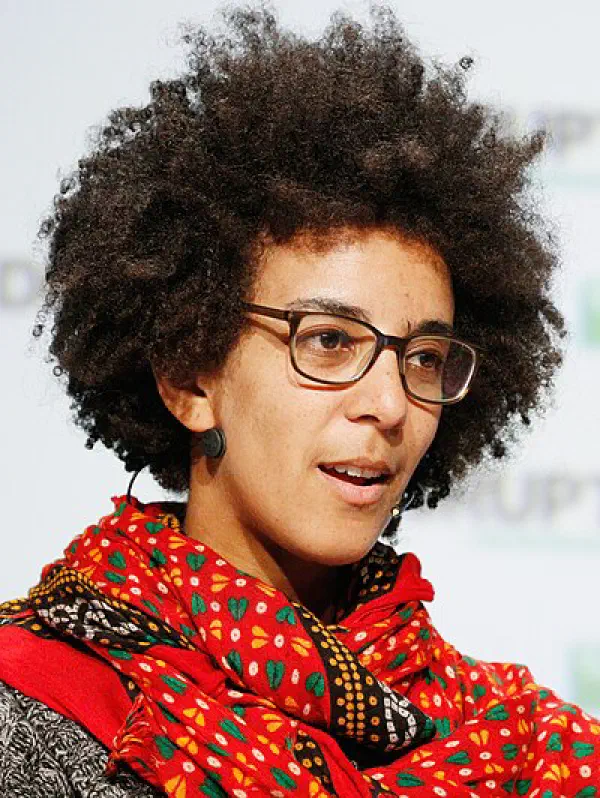

DAIR founder and Executive Director, AI ethics researcher

Timnit Gebru

Profile

Timnit Gebru is one of the most consequential figures in AI ethics, not because she invented a technique but because she named what the field was doing wrong and refused to stop saying it. She’s a computer scientist, founder of the Distributed AI Research Institute (DAIR), and — fairly or not — the person whose firing turned “AI ethics” from a corporate checkbox into a mainstream fight.

Born in Ethiopia to Eritrean parents, she arrived in the US as a political refugee, studied electrical engineering at Stanford, and did her PhD in computer vision under Fei-Fei Li. Her early work mined Google Street View imagery to infer demographic patterns. It was impressive as computer vision, and it also set her up to see what the rest of the field was missing. At Microsoft Research she co-authored Gender Shades with Joy Buolamwini, the study that showed commercial gender classification systems had dramatically higher error rates for darker-skinned women than for lighter-skinned men. It’s one of the most cited pieces of AI fairness research ever, and it changed what IBM, Microsoft, and Amazon were willing to ship. She also co-founded Black in AI with Rediet Abebe.

Then came 2020. As co-lead of Google’s Ethical AI team, Gebru co-authored “On the Dangers of Stochastic Parrots” with Margaret Mitchell, Emily Bender, and Angelina McMillan-Major — a paper arguing that scaling language models up indefinitely carried risks around environmental cost, opacity, embedded bias, and the illusion of understanding. Google demanded she retract it or strip her name. She asked for transparency about who had made the decision; the company fired her instead (Google called it a resignation; Jeff Dean said the paper “didn’t meet our bar”). Thousands of Google employees and academics signed open letters. The paper’s warnings — disinformation, hallucination, bias laundering — now read like a field manual for everything that happened when ChatGPT landed two years later.

In 2021 she launched DAIR as a deliberately independent, community-grounded research institute focused on how AI harms marginalized groups, particularly in Africa and the African diaspora. She’s become a vocal critic of the AGI/existential-risk framing pushed by the OpenAI/Anthropic wing of the industry, arguing that it’s a distraction from concrete present-day harms — labor exploitation in data annotation, surveillance, and systems that encode historical bias at scale. For developers: her work is the reason you should think twice about what’s in your training data, who labeled it, and who gets hurt when your model is wrong. The “stochastic parrots” framing remains one of the clearest intellectual tools we have for thinking about what LLMs actually are.

Key Articles & Papers

- On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?
- Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification
- Datasheets for Datasets
- Model Cards for Model Reporting
- Using deep learning and Google Street View to estimate the demographic makeup of neighborhoods across the United States
- Race and Gender (chapter in The Oxford Handbook of Ethics of AI)
- The Exploited Labor Behind Artificial Intelligence
- TESCREAL Bundle: Eugenics and the Promise of Utopia through Artificial General Intelligence
- Hierarchy of knowledge: An Ethiopian perspective on what it means to participate in the data economy

Controversies

Firing from Google (December 2020): Google forced Gebru out after she refused to retract the Stochastic Parrots paper or strip her name from it without an explanation of the review process. Google characterized it as a resignation; Gebru and the roughly 2,700 Google employees who signed a protest letter called it a firing. The episode badly damaged Google’s credibility on internal AI ethics research and was followed within months by the firing of Margaret Mitchell.

TESCREAL and the AGI debate: Her “TESCREAL bundle” framing, developed with Émile P. Torres, lumps transhumanism, effective altruism, longtermism, and several related movements into a single ideological package that she argues has eugenic roots. It is sharp, combative, and contested. Critics say it flattens distinct traditions; supporters say it names a real pattern in how AGI rhetoric functions. Either way, she’s the loudest voice arguing that the industry’s existential-risk framing serves to distract from present harms.
