Stanford NLP pioneer, SAIL director, Siebel Professor

Chris Manning

Profile

Chris Manning is the quiet center of gravity in natural language processing. As the inaugural Thomas M. Siebel Professor in Machine Learning at Stanford, director of the Stanford AI Lab (SAIL), and associate director of Stanford HAI, he sits at the intersection of linguistics and computer science: he trained as a linguist (PhD under Joan Bresnan, 1994), then pivoted into the statistical and neural revolutions that rewrote his field. If you studied NLP at a university in the last twenty years, there is a good chance you learned it from a textbook he co-wrote.

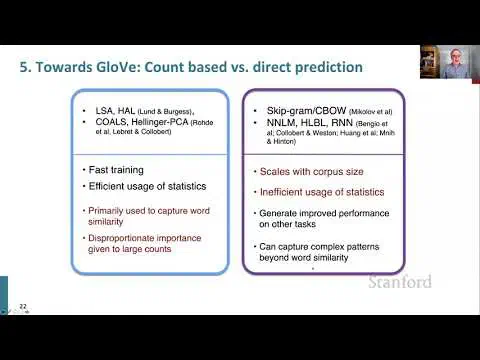

His research output reads like a tour of the last decade of NLP. He co-created GloVe, the word-vector model that became a reference point for lexical semantics. He co-developed the bilinear (multiplicative) form of attention, a close relative of the dot-product attention used in transformers. He published foundational work on neural machine translation, dependency parsing, tree-recursive networks, and self-supervised pre-training. The field recognized it: three consecutive ACL Test of Time Awards (2023–2025) and the IEEE John von Neumann Medal in 2024.

But the more interesting thing about Manning is the student tree. His advisees include Richard Socher (now CEO of You.com), Dan Klein (Berkeley), Danqi Chen (Princeton), and many others running labs and companies across the industry. His course CS224N: Natural Language Processing with Deep Learning — taught every year, posted free on YouTube — has become the canonical on-ramp to modern NLP. When someone says “I took 224N to learn transformers,” they mean his lectures.

For developers today, Manning matters because he represents the thread connecting symbolic linguistics to deep learning to LLMs. He’s not a hype merchant and not a doomer — he’s the guy who built the ladder most of the field climbed. If you’re serious about understanding how language models got here, start with his course.

Books

Key Articles & Papers

- GloVe: Global Vectors for Word Representation
- Effective Approaches to Attention-based Neural Machine Translation
- A Fast and Accurate Dependency Parser using Neural Networks
- Recursive Deep Models for Semantic Compositionality Over a Sentiment Treebank
- ELECTRA: Pre-training Text Encoders as Discriminators Rather Than Generators
- Emergent linguistic structure in artificial neural networks trained by self-supervision
- Stanza: A Python NLP Toolkit for Many Human Languages

Videos

YouTube

Spotify Podcasts