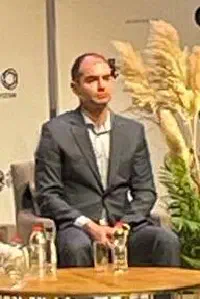

Safe Superintelligence founder and CEO, superintelligence researcher

Ilya Sutskever

Profile

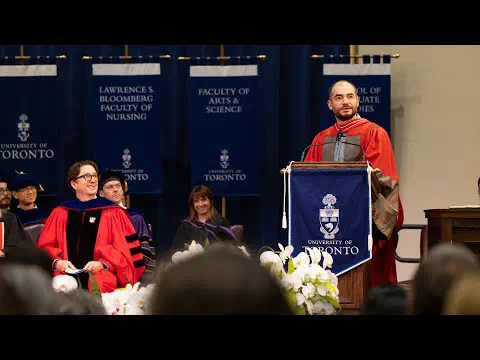

Ilya Sutskever is arguably the most technically influential mind of the modern AI era. Hinton’s student at the University of Toronto, he co-authored AlexNet in 2012 — the paper that effectively kicked off the deep learning revolution. He followed it with seq2seq learning at Google Brain in 2014, then in 2015 co-founded OpenAI with Sam Altman, Elon Musk, Greg Brockman, and others. For nearly a decade as Chief Scientist he shaped everything from GPT-2 through GPT-4.

If you’ve used ChatGPT, you’ve used something Ilya helped build. More importantly, he saw scaling before almost anyone else — the unreasonable bet that if you just keep throwing compute and data at neural networks, something remarkable emerges. That intuition, more than any single architectural trick, is what made the current LLM era possible. Geoffrey Hinton has repeatedly described him as the best student he ever had.

In November 2023 Ilya was on the OpenAI board that briefly fired Altman, a five-day corporate earthquake that ended with Altman reinstated and Ilya's influence at the company effectively gone. He formally left in May 2024. A month later he co-founded Safe Superintelligence Inc. (SSI) with Daniel Gross and Daniel Levy. The pitch is single-minded: one product, safe superintelligence, with no intermediate commercial distractions. It reportedly raised $1B at a $5B valuation within about three months, then reached a valuation of more than $30B within a year, all with essentially zero public output.

For developers, Ilya is a model of what a research-first mind looks like in an industry obsessed with demos and Twitter presence. He isn't loud, isn't podcasting, isn't shipping. When he does speak, people listen, because he's been right before: in his NeurIPS 2024 Test of Time award talk for the seq2seq paper, he declared that "pre-training as we know it will end." His move to SSI is a bet worth watching carefully: he clearly believes superintelligence is a near-term engineering problem, and that safety is the thing worth optimizing for now.

Key Articles & Papers

- ImageNet Classification with Deep Convolutional Neural Networks (AlexNet)
- Sequence to Sequence Learning with Neural Networks
- Generating Text with Recurrent Neural Networks
- Language Models are Few-Shot Learners (GPT-3)
- Introducing Superalignment
- Safe Superintelligence Inc.: founding statement

Controversies

The OpenAI board crisis (November 2023). Ilya was one of four board members who voted to fire Sam Altman; the board said Altman had not been "consistently candid" in his communications with it. Within days, pressure from employees and Microsoft's backing of Altman reversed the decision. Ilya publicly expressed regret ("I deeply regret my participation in the board's actions"), left the board, and went silent for months before leaving OpenAI in May 2024. The episode remains one of the most consequential boardroom events in tech history and is still only partly understood from public reporting.

Dissolution of the Superalignment team. Jan Leike, who co-led the Superalignment team with Ilya, resigned right after Ilya's departure, and the team was dissolved soon afterward. Leike wrote publicly that safety culture and processes had "taken a backseat to shiny products." Whether this vindicates Ilya's decision to start SSI or reflects a deeper failure of governance at OpenAI is a matter of perspective, but it's the context against which SSI should be read.
