Anthropic Head of Public Benefit, co-founder and policy leader

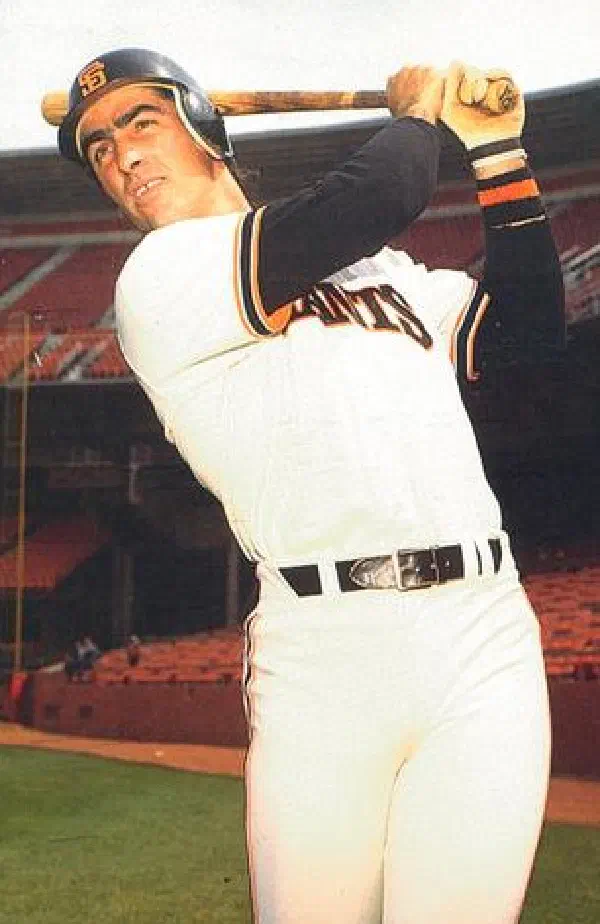

Jack Clark

Profile

Jack Clark is a co-founder and Head of Policy of Anthropic, the AI safety company behind Claude. He’s one of the most influential voices bridging frontier AI research and public policy: the person who walks into Senate hearings, UN Security Council briefings, and White House meetings to explain what’s actually happening inside the labs. If you want to understand how AI policy gets shaped in Washington, Brussels, and the OECD, Clark is one of a handful of people who keep turning up in the room.

Before Anthropic, Clark was Policy Director at OpenAI, which he joined in 2016 after a career as a tech journalist at Bloomberg and The Register. That journalism background is his superpower: he can read a paper, spot what matters, and write about it for humans. He left OpenAI in 2021 with Dario Amodei, Daniela Amodei, Chris Olah, Jared Kaplan, and others to start Anthropic, a move that reshaped the frontier lab landscape.

What makes Clark essential reading for developers is Import AI, his weekly Substack newsletter running since 2016 with ~70,000 subscribers. It’s three things stitched together: a digest of the week’s significant research papers, a policy analysis thread, and a short piece of speculative fiction. Nothing else on the internet covers AI with that mix of technical depth, geopolitical framing, and literary imagination. If you read one AI newsletter, this is the one working researchers and policy people actually read.

Clark was a founding member of the Stanford HAI AI Index (2017–2024), served on the inaugural US National AI Advisory Committee, co-chairs the OECD working group on AI system classification, and has testified repeatedly before Congress. In March 2026 he launched the Anthropic Institute, the company’s policy and governance research division. He’s opinionated and increasingly blunt — his October 2025 talk “Technological Optimism and Appropriate Fear” argued that AI researchers including himself are genuinely scared of what they’re building, and that pretending otherwise is a policy failure.

Key Articles & Papers

- Import AI 431: Technological Optimism and Appropriate Fear
- Import AI newsletter
- Import AI on Substack
- The Malicious Use of Artificial Intelligence
- Release Strategies and the Social Impacts of Language Models
- Stanford AI Index Report
- Written Testimony before the House Science Committee
- UN Security Council briefing on AI

Videos

YouTube

Spotify Podcasts

![[5/21 05:00] Anthropic co-founder Jack Clark Nobel Prize prediction / JPMorgan CEO Jamie Dimon AI hiring expansion](/images/people/jack-clark/spotify/7H1OJ5RNhvbFL8XNxbbFDR_hu_2df88014c89504bd.webp)