Apple AI research director, Torch co-creator, former Google Brain

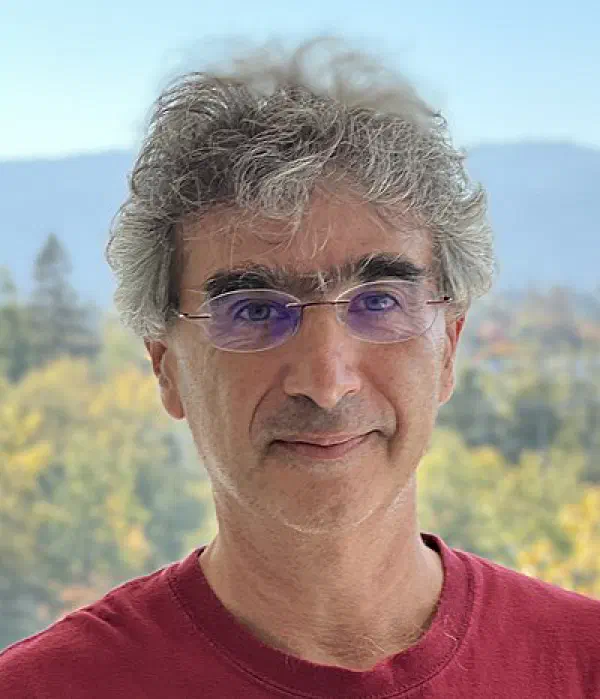

Samy Bengio

Profile

Samy Bengio is the Senior Director of AI and Machine Learning Research at Apple, where he leads a large research organization whose work now ships inside iPhones, iPads, and Macs as the foundation of Apple Intelligence. Before Apple, he spent 14 years at Google, where he became one of the early scientific leaders of Google Brain, the team that helped turn deep learning from an academic curiosity into the engine of modern AI. He earned his Ph.D. from the Université de Montréal in 1993, and yes, he is the younger brother of Yoshua Bengio, though he has built a serious body of work of his own, with roughly 250 papers and over 100,000 citations.

His Google-era fingerprints are on some of the papers developers still quote today. Show and Tell, co-authored with Oriol Vinyals, was one of the first end-to-end neural image captioning systems. Scheduled Sampling gave sequence-to-sequence models a way to survive their own generation errors during training. And Understanding Deep Learning Requires Rethinking Generalization forced the field to admit that nobody actually knew why over-parameterized networks worked, a question still not fully settled nearly a decade later. He was NeurIPS program chair in 2017 and general chair in 2018, which is about as central to the community as you can get without running a lab of your own.

He left Google in 2021 in the fallout from the firings of Timnit Gebru and Margaret Mitchell, both of whom reported to him. Apple picked him up within weeks, and the hire landed as a clear signal: Apple, long seen as the sleepiest of the big AI labs, was finally playing for research talent. His group now publishes aggressively at NeurIPS, ICLR, and ICML while also feeding directly into Apple’s on-device and server foundation models. He’s also an adjunct professor at EPFL.

What makes him worth following for anyone building with AI: his team sits at the unusual intersection of serious academic research and constrained, ship-it product engineering. Apple cannot run a 400B-parameter model on a phone, so his researchers have to care about efficiency, distillation, and what models actually do, not just what they score on benchmarks. The Illusion of Thinking, a 2025 paper he co-authored that picked apart the reasoning claims of frontier models using controlled puzzle environments, was one of the most-discussed AI papers of its quarter and a rare case of a big-tech lab publicly poking holes in the dominant narrative. That’s the flavor of work to expect from him.

Key Articles & Papers

- The Illusion of Thinking: Understanding the Strengths and Limitations of Reasoning Models via the Lens of Problem Complexity
- GSM-Symbolic: Understanding the Limitations of Mathematical Reasoning in Large Language Models
- How Far Can Transformers Reason? The Globality Barrier and Inductive Scratchpad
- Understanding Deep Learning Requires Rethinking Generalization
- Show and Tell: A Neural Image Caption Generator
- Scheduled Sampling for Sequence Prediction with Recurrent Neural Networks
- Adversarial Examples in the Physical World
- Apple Intelligence Foundation Language Models
- Transformers Learn Through Gradual Rank Increase
