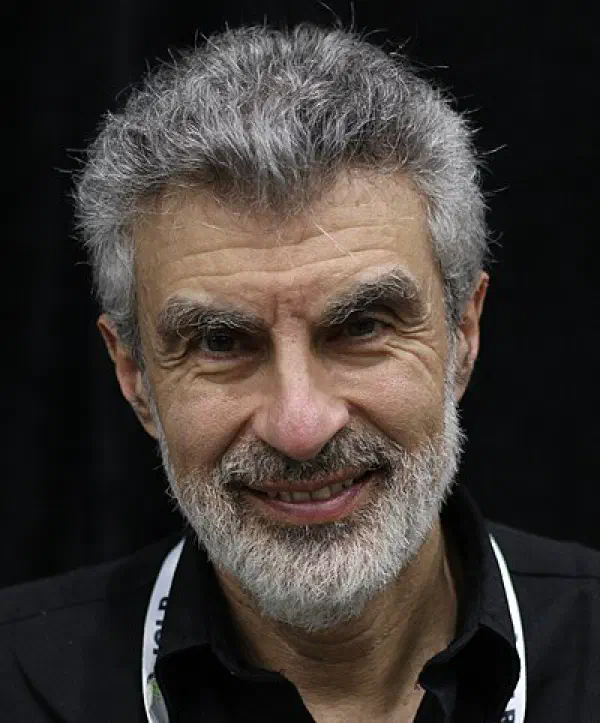

AI safety advocate, LawZero co-president and MILA founder

Yoshua Bengio

Profile

Yoshua Bengio is the quiet third of the “Godfathers of AI” — less publicly visible than Geoffrey Hinton or Yann LeCun, but arguably the most academically prolific of the trio. He shared the 2018 Turing Award with them for work that turned neural networks from a fringe idea into the foundation of modern AI. While Hinton went to Google and LeCun to Meta, Bengio stayed in academia at the Université de Montréal and built MILA into one of the densest concentrations of deep learning talent on the planet.

His research fingerprints are on almost everything developers touch today. His 2003 paper on neural probabilistic language models laid the groundwork for word embeddings and, eventually, transformers. He co-authored the attention mechanism paper that made neural machine translation work — the same attention idea Ashish Vaswani and colleagues later scaled into “Attention Is All You Need.” He co-invented generative adversarial networks with Ian Goodfellow, then his PhD student. If you learned deep learning from a textbook, it was probably his: Deep Learning, co-written with Goodfellow and Aaron Courville, is still the standard reference. His brother Samy Bengio runs ML research at Apple — a family business, apparently.

Then, around 2023, Bengio did something that surprised people: he publicly pivoted to AI safety. He signed the Future of Life Institute’s “pause” letter, started warning about loss-of-control scenarios, and agreed to chair the International AI Safety Report, the closest thing AI has to an IPCC-style consensus document. In 2025 he launched LawZero, a nonprofit researching “Scientist AI”: systems designed to be non-agentic and truthful by construction. Unlike Eliezer Yudkowsky, he’s not predicting doom, and unlike LeCun, he’s not dismissing the risk. He’s trying to work the problem.

For developers learning AI, Bengio is worth paying attention to for a specific reason: his trajectory from pure capability research to safety research mirrors a journey a lot of thoughtful builders end up making. He doesn’t do social media theater. He publishes, he teaches, he builds institutions. When someone who co-invented modern deep learning says “we should slow down and understand what we’re building,” that’s signal, not noise.

Books

Key Articles & Papers

- A Neural Probabilistic Language Model
- Learning Long-Term Dependencies with Gradient Descent is Difficult
- Neural Machine Translation by Jointly Learning to Align and Translate
- Generative Adversarial Nets
- Representation Learning: A Review and New Perspectives
- Greedy Layer-Wise Training of Deep Networks
- Managing extreme AI risks amid rapid progress
- International AI Safety Report
- Superintelligent Agents Pose Catastrophic Risks: Can Scientist AI Offer a Safer Path?
- FAQ on Catastrophic AI Risks

YouTube

Spotify Podcasts