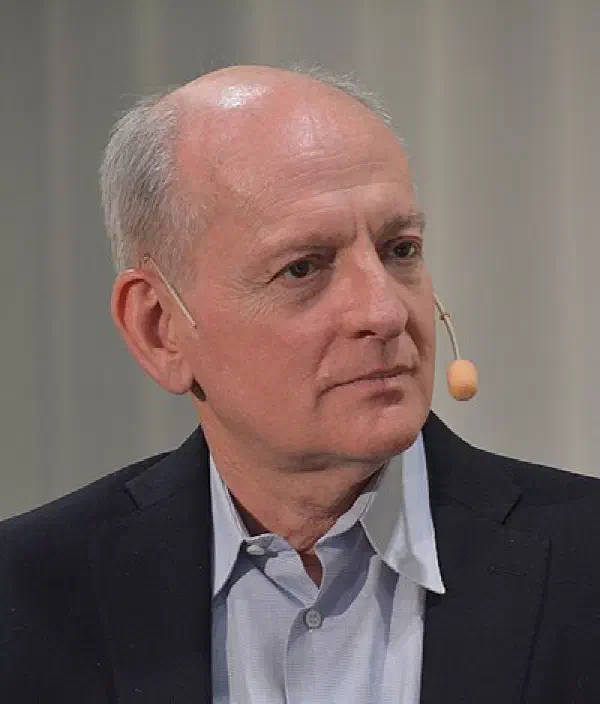

UC Berkeley Distinguished Professor, AI safety and governance

Stuart Russell

Profile

If you took an AI course at any university in the last twenty years, you almost certainly learned from Stuart Russell. His textbook with Peter Norvig — Artificial Intelligence: A Modern Approach — is the canonical introduction to the field, used at over 1500 universities worldwide and now in its fourth edition. It’s the book that teaches search, planning, knowledge representation, learning, and (increasingly in later editions) safety. Russell is the Smith-Zadeh Professor of Engineering at UC Berkeley, where he’s been on the faculty since 1986.

Then he wrote himself out of the consensus. Around the mid-2010s, Russell became one of the most credentialed voices arguing that the standard model of AI — building agents that optimize a fixed objective — is fundamentally broken at scale. Get the objective slightly wrong and a sufficiently capable optimizer produces catastrophe. His 2019 book Human Compatible lays out the alternative: build machines that are explicitly uncertain about what humans want, and that defer to humans accordingly. He calls it provably beneficial AI, and in 2016 he founded the Center for Human-Compatible AI (CHAI) at Berkeley to pursue it.

Russell matters because he’s the rare AI safety voice that can’t be dismissed as an outsider or doomer crank. He literally wrote the textbook. When he co-produced the Slaughterbots video and screened it at the UN to argue for a ban on lethal autonomous weapons, governments listened. In 2021 he gave the BBC Reith Lectures, titled “Living with Artificial Intelligence.” His arguments overlap with those of Eliezer Yudkowsky and Max Tegmark but land differently: measured, technical, and grounded in the same formalism his textbook uses to teach the next generation.

In 2025 he was elected to both the Royal Society and the U.S. National Academy of Engineering, and he convened the inaugural meeting of the International Association for Safe and Ethical AI in Paris. For developers building with AI today, Russell’s work is the bridge between “here’s how reinforcement learning works” and “here’s why optimizing the wrong objective is the central engineering problem of our era.” Read the textbook to learn AI; read Human Compatible to learn why Russell now thinks his own field has been pointed in the wrong direction.

Books

Key Articles & Papers

- Provably Beneficial Artificial Intelligence
- Research Priorities for Robust and Beneficial AI
- The Long-Term Future of (Artificial) Intelligence
- Lethal Autonomous Weapons Systems
- Cooperative Inverse Reinforcement Learning
- The AI Race Is About to Get Even More Dangerous

Videos

Controversies

Russell’s existential-risk arguments have drawn pushback from peers like Yann LeCun, who has publicly dismissed near-term AGI risk concerns as overblown and counterproductive to research. Russell, in turn, has been blunt about what he sees as the field’s failure to take its own creations seriously. The disagreement is genuine and ongoing — and worth understanding, since both sides are arguing from inside the field rather than outside it.

He has also been criticized from the other direction by AI ethics researchers, who argue that a focus on long-term existential risk distracts from concrete present-day harms such as bias, surveillance, and labor displacement. Russell’s response has generally been that both matter and that the framing is a false choice.
